Low-Code Series - Part 3. Intervening in the workflow and expanding the solution

The second part of this Low-Code series described how data is processed. In this third part, the solution built on the Low-Code platform DWKit is extended with custom functions, with special emphasis on the server components written in C#. Fig. 1 shows the solution's architecture. The front end can be a website or a React Native app. The metadata (form definitions, data definitions, etc.), the required data (Data Controller) and information about the current workflow are retrieved via controllers. Alternatively, data from the form inputs is used.

Fig. 1: Architecture of the solution

Data controllers and workflow controllers access internal components whose source code can be purchased. However, this is usually not necessary because the controllers already leave plenty of room to build in your own logic.

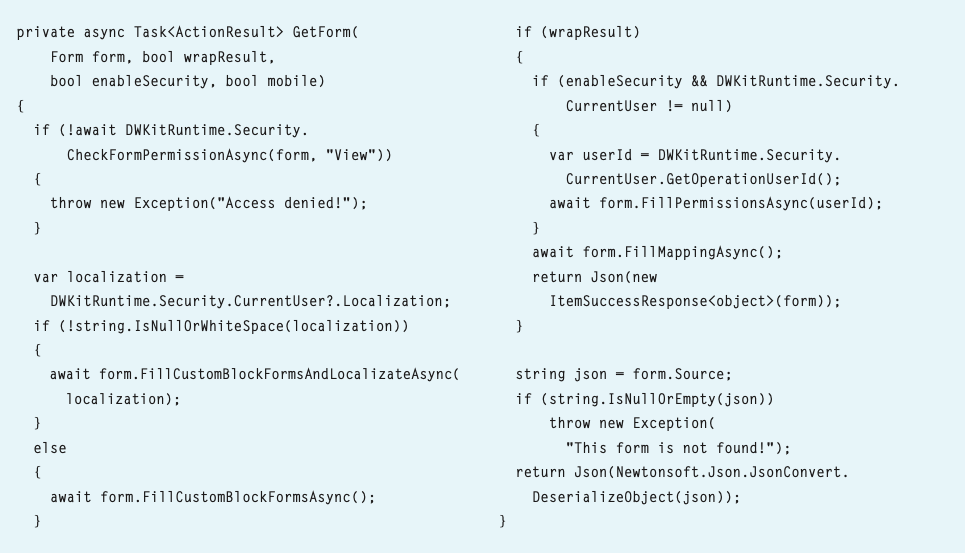

Listing 1 shows the code for returning a form design including its contents. First, the permission is checked, then the language is adapted if necessary. Finally, FillMappings loads the data mapped in the form.

Listing 1: Return form design with content

The metadata of the forms is stored in JSON files in a directory on the server. If the interface is modified, a simple copy-and-paste deployment is therefore sufficient to update the production system.
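The three steps described for Listing 1 (permission check, language adaptation, filling the mapped data) can be sketched roughly as follows. This is a minimal illustration in JavaScript, not the DWKit controller itself, which is written in C#. The names `getForm`, `forms` and `records` and the permission format are assumptions made up for this example.

```javascript
// Hypothetical stand-ins for the form metadata and the database; not DWKit API.
const forms = {
  receipt: { title: { en: "Goods receipt", de: "Wareneingang" }, fields: ["Status"] },
};
const records = { "42": { Status: "Open" } };

function getForm(user, formName, recordId, language) {
  // 1. Check the permission first.
  if (!user.permissions.includes("view:" + formName)) {
    return { success: false, message: "Access denied" };
  }
  const design = forms[formName];
  // 2. Adapt the language if a translation exists, otherwise fall back to English.
  const title = design.title[language] || design.title.en;
  // 3. Fill the fields mapped in the form with the record's data.
  const data = {};
  for (const field of design.fields) {
    data[field] = records[recordId][field];
  }
  return { success: true, form: { title, fields: design.fields }, data };
}

const response = getForm({ permissions: ["view:receipt"] }, "receipt", "42", "de");
```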

If the front end is a React Native app, i.e. a native app for iOS or Android, the form can be changed on the server side without having to resubmit the app. Thus, continuous deployment is a walk in the park.

The metadata describes the forms, but not the functions. There are two controllers for this, which are in a separate project and therefore in a separate DLL: the FormActionController and the WorkflowActionController. Functions that can be called from the client are registered in the FormActionController. They are available in the event handler as a list of functions. When a function is called, the complete state of the form (i.e. the React state) is passed as a parameter. This makes it possible to read all form values as well as additional information, such as transfer parameters that can be defined in the call. These values can not only be read, but also changed.
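The registration idea can be illustrated with a small registry: functions are registered under a name, the names are offered as a list, and each call receives the complete form state plus optional parameters. This is only a sketch in JavaScript under my own assumptions. The class `FormActionRegistry` and the action `setUrgent` are hypothetical, and the real FormActionController is C# code with a different shape.

```javascript
// Hypothetical registry for illustration; not the DWKit FormActionController.
class FormActionRegistry {
  constructor() {
    this.actions = new Map();
  }

  register(name, handler) {
    this.actions.set(name, handler);
  }

  // Names that a form designer could pick from in an event handler.
  list() {
    return [...this.actions.keys()];
  }

  // The handler receives the complete form state and may return a changed copy.
  execute(name, state, parameters = {}) {
    const handler = this.actions.get(name);
    if (!handler) throw new Error("Unknown action: " + name);
    return handler(state, parameters);
  }
}

const registry = new FormActionRegistry();
registry.register("setUrgent", (state, parameters) => ({
  ...state,
  data: { ...state.data, Urgent: parameters.value === true },
}));

const newState = registry.execute("setUrgent", { data: { Name: "Order 1" } }, { value: true });
```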

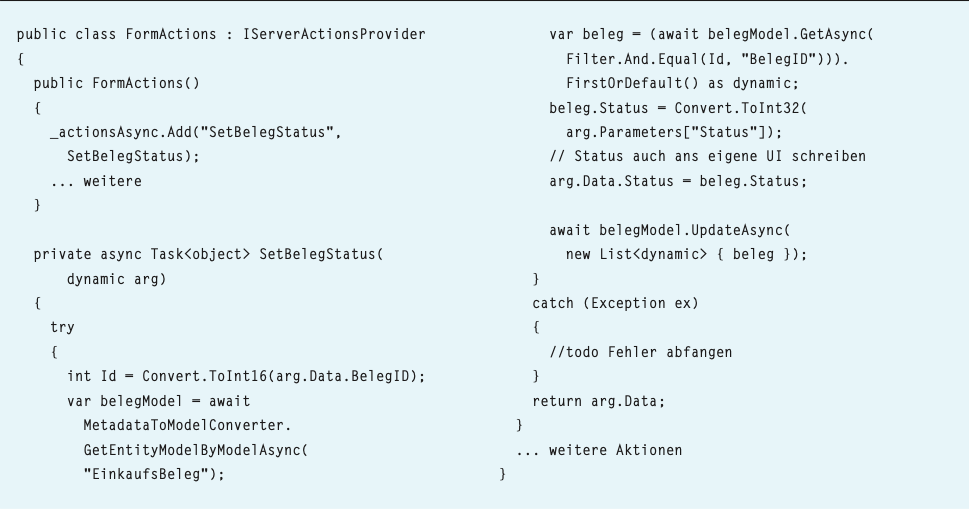

Take a closer look at the code in Listing 2. The form data (i.e. the fields of the underlying record, in this case the request for a goods receipt document) is passed as arg.Data. In the PurchaseReceipt table, the status of the receipt is to be set to the value that comes from the client.

Listing 2: Set receipt status

First, the DocumentID is needed. args.Data is dynamic, so IntelliSense does not work. You have to know exactly how the property is spelled, and the name is case-sensitive! For people of advanced age (like me), this means that the debugger has to be stopped frequently and the spelling corrected. A simple edit-and-continue fails, so the code has to be completely recompiled again and again. This is the price one has to pay for the freedom and flexibility of the solution.

The PurchaseReceipt table must be defined in the metadata. Only then does the MetaDataModellConverter return a repository with functions for reading and saving records. The corresponding receipt is fetched from the database with a filter on the ReceiptID, then the state is changed and the record is written back into the database.

Changing data in args affects the UI. React merges the returned state into its own state and updates the fields accordingly. In this case, the state field is changed.
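The sequence described above (read the ID from the dynamic data, fetch the record with a filter, change the state, write it back, return the changed data) might look like this in a simplified form. The sketch is JavaScript with an in-memory array standing in for the PurchaseReceipt table. The function `setReceiptState`, the `Parameters` property and the sample data are assumptions for illustration, not the code of Listing 2.

```javascript
// In-memory stand-in for the PurchaseReceipt table (hypothetical data).
const purchaseReceipts = [{ ReceiptID: "r-1", State: "Open" }];

function setReceiptState(args) {
  // Property names on dynamic data are case-sensitive: "DocumentID" is not "DocumentId".
  const documentId = args.Data.DocumentID;
  if (documentId === undefined) {
    throw new Error("DocumentID missing; check the exact spelling of the property");
  }
  // Fetch the receipt with a filter on its ID, change the state, write it back.
  const receipt = purchaseReceipts.find((r) => r.ReceiptID === documentId);
  if (!receipt) throw new Error("No receipt with ID " + documentId);
  receipt.State = args.Parameters.state;
  // Returning the changed data lets the client update the bound field.
  return { ...args.Data, State: receipt.State };
}

// Client side: merge the returned data into the current form state.
const formState = { DocumentID: "r-1", State: "Open", Comment: "unchanged" };
const returned = setReceiptState({ Data: formState, Parameters: { state: "Received" } });
const merged = { ...formState, ...returned };
```

A misspelled property such as `DocumentId` yields `undefined` rather than a compile-time error, which is why the sketch checks for it explicitly.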

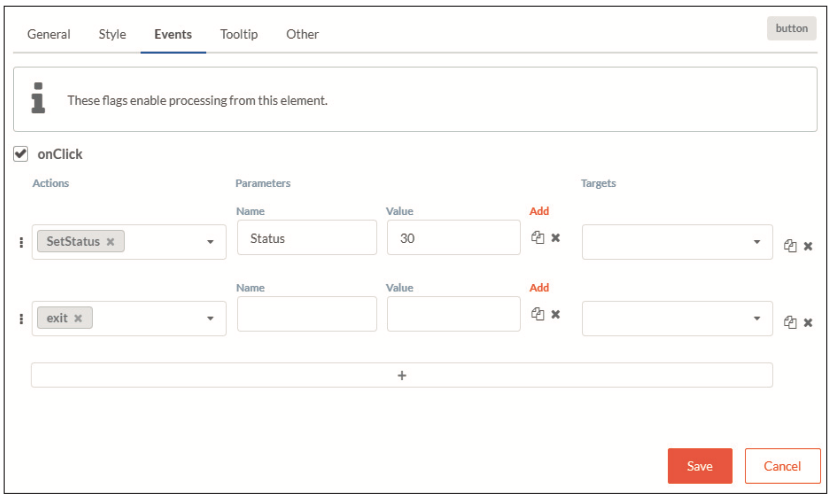

In Fig. 2 you can see how the function is called: All functions of the FormActionController are available in the event handler of the button control onClick.

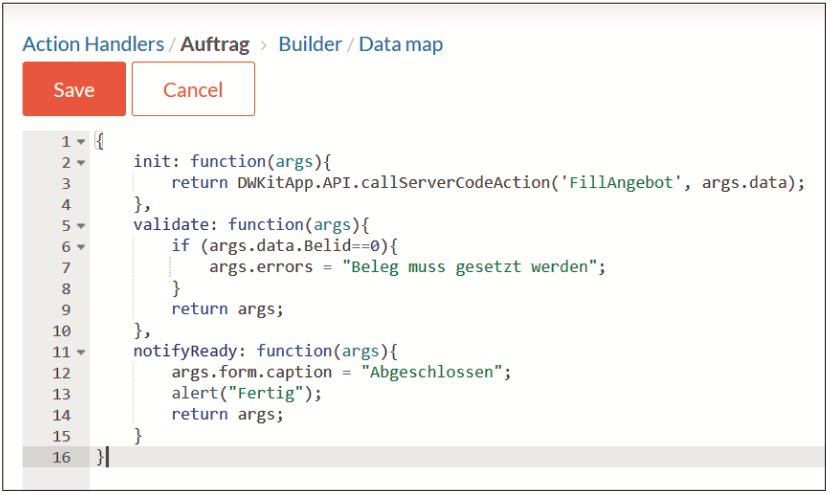

Fig. 2: onClick: Event handler of the button control

There are two other ways to write functions for the onClick handler. The first is to write JavaScript code directly in the form (compare Fig. 3). Here, the provided functions such as init and validate can also be overridden to implement your own validation logic or initialisation sequences. Several functions can be called one after the other. If an error occurs, further processing is terminated. Fig. 3 also shows that parameters can be passed. These can be fixed numbers or variables from the workflow (for example @WorkflowState) or the data record (for example {DocumentId}). But you get these in arg.Data anyway.
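How a chain of actions with parameters could behave is sketched below: each parameter is resolved first, the actions run in order, and the first error stops the chain. The helpers `resolveParameter` and `runChain` are hypothetical and only mimic the behaviour described here. How DWKit resolves `@WorkflowState` or `{DocumentId}` internally is not shown in this article.

```javascript
// Replace {Field} with record values and @Name with workflow values.
// Hypothetical helper for illustration only.
function resolveParameter(value, record, workflow) {
  if (typeof value !== "string") return value; // fixed numbers pass through
  const field = value.match(/^\{(\w+)\}$/);
  if (field) return record[field[1]];
  const variable = value.match(/^@(\w+)$/);
  if (variable) return workflow[variable[1]];
  return value;
}

// Run the actions in order; stop at the first one that throws.
function runChain(actions, record, workflow) {
  const executed = [];
  for (const { name, run, parameter } of actions) {
    try {
      run(resolveParameter(parameter, record, workflow));
      executed.push(name);
    } catch (error) {
      return { executed, failedAt: name, message: error.message };
    }
  }
  return { executed, failedAt: null, message: null };
}

const seen = [];
const result = runChain(
  [
    { name: "validate", run: (id) => seen.push(id), parameter: "{DocumentId}" },
    { name: "check", run: () => { throw new Error("State not allowed"); }, parameter: "@WorkflowState" },
    { name: "save", run: () => seen.push("saved"), parameter: 1 },
  ],
  { DocumentId: "d-7" },
  { WorkflowState: "Draft" }
);
```

In this run only `validate` completes. `save` is never reached because `check` fails.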

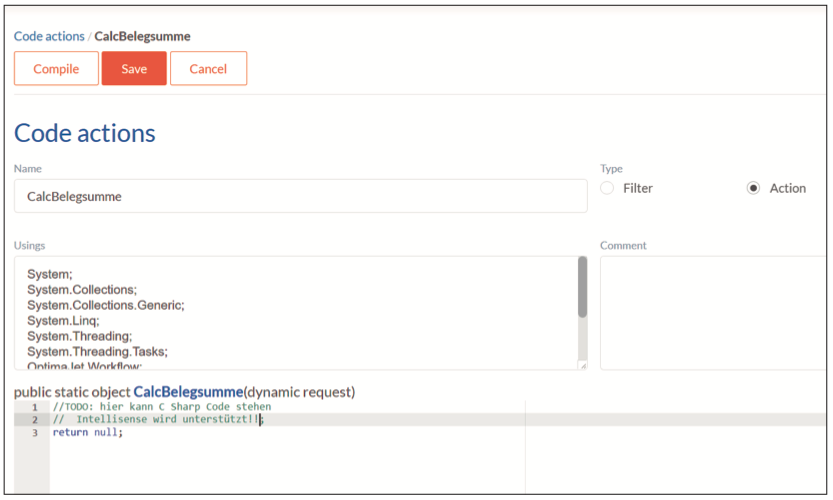

Fig. 3: Coding form actions in JavaScript

The second option is to write C# code that is available across applications. In Fig. 4 you can see that the using statements can be extended, so IntelliSense is available here as well.

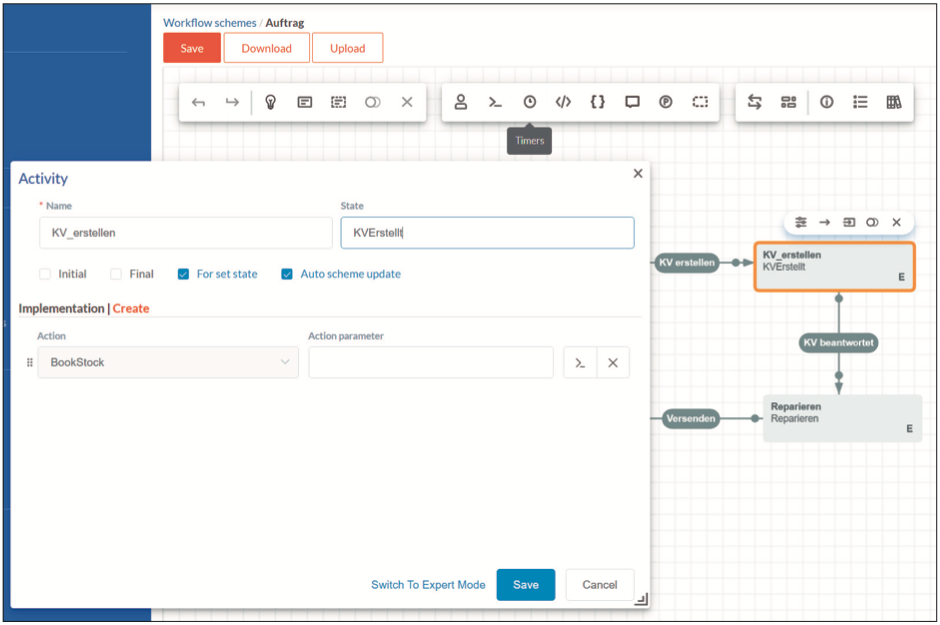

Fig. 4: C# using statements can be extended, IntelliSense is available

In addition to extending forms, it is also useful to execute actions when certain workflow states are reached. Whenever such a state is reached, the code is executed. This can quite possibly happen several times, for example, if an action is repeatedly rejected and then immediately requested again. You should pay attention to this!
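Where repeating a side effect would be wrong (sending the same notification twice, for instance), one possible guard is to remember which combination of process and state has already been handled. The following JavaScript sketch shows the idea with an in-memory set. The function `runOncePerState` is an assumption of mine, and a real solution would have to persist this information, for example in the database.

```javascript
// Remembers which process/state combinations were already handled.
// In-memory only; a real guard would need persistent storage.
const completed = new Set();

function runOncePerState(processId, stateName, action) {
  const key = processId + ":" + stateName;
  if (completed.has(key)) return false; // already handled, skip the side effect
  action();
  completed.add(key);
  return true;
}

let notifications = 0;
const notify = () => { notifications += 1; };

// The state "Approval" is reached twice, e.g. after a rejection and a new request.
const first = runOncePerState("p-1", "Approval", notify);
const second = runOncePerState("p-1", "Approval", notify);
```

If the action is meant to run on every visit, as with the storage booking described later, no such guard is needed.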

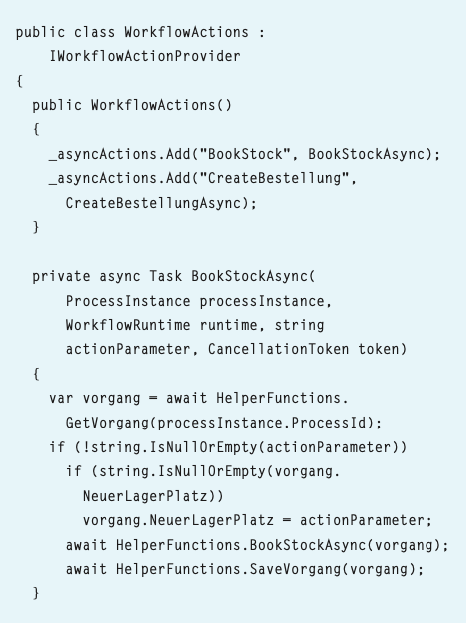

These functions are defined in the WorkflowActionController. You can see an excerpt of them in Listing 3. As with the FormActionController, the functions are registered in the constructor.

Listing 3: WorkflowActions (excerpt)

The instance of the workflow, the current runtime, any optional parameters along with a cancellation token are then passed in the method signature. The instance of the workflow is important because the underlying data has the same ID as the process instance.

As a reminder, a workflow is usually started by filling out a form with an underlying table and then clicking Start. Now a new entry is made in the table, for example, a new order is created and a primary key (GUID) is assigned. The workflow is started with the same GUID, so that record and workflow can always be matched.

The corresponding data record with the same ID is retrieved via the metadata in a helper function (see Listing 2).
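The shared key is what makes such a helper function trivial. The sketch below, in JavaScript with two in-memory maps, creates a record, starts a process under the same ID and later finds the record from the process instance. All names (`createOrderAndStartWorkflow`, `getRecordForProcess`, `newId`) are hypothetical, and `newId` is only a placeholder for a real GUID generator.

```javascript
// In-memory stand-ins for the order table and the workflow runtime (hypothetical).
const orders = new Map();
const processes = new Map();
let sequence = 0;

// Placeholder for a real GUID generator.
function newId() {
  sequence += 1;
  return "id-" + sequence;
}

function createOrderAndStartWorkflow(order) {
  const id = newId();
  orders.set(id, { ...order, Id: id }); // the record gets its primary key
  processes.set(id, { ProcessId: id, State: "Started" }); // the workflow reuses it
  return id;
}

// Helper used inside a workflow action: the process ID is also the record ID.
function getRecordForProcess(processInstance) {
  return orders.get(processInstance.ProcessId);
}

const orderId = createOrderAndStartWorkflow({ Customer: "ACME" });
const record = getRecordForProcess(processes.get(orderId));
```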

For example, if a parameter was passed in this step, the new storage location of the task is overwritten with this value, and a storage booking is executed each time this step is reached.

The project that contains the FormActionController and the WorkflowActionController also has further classes for including your own logic. Triggers are one example of this, see Fig. 5.

Fig. 5: Include trigger and further logic

In the form, it can be defined whether a special trigger is activated when the record is changed. Example: If the flag urgent is set in the order, this flag should also be set in all operations that belong to this order. The corresponding code can be found in Listing 4.
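The logic of such a trigger is simple. As a rough JavaScript sketch with an in-memory list of operations: when an order is changed and its urgent flag is set, the flag is copied to every operation of that order. The function `onOrderChanged` and the field names are assumptions for this example and do not reproduce Listing 4.

```javascript
// In-memory stand-in for the operations table (hypothetical data).
const operations = [
  { Id: 1, OrderId: "o-1", Urgent: false },
  { Id: 2, OrderId: "o-1", Urgent: false },
  { Id: 3, OrderId: "o-2", Urgent: false },
];

// Called after an order was changed; returns the number of updated operations.
function onOrderChanged(order) {
  if (!order.Urgent) return 0; // nothing to propagate
  let changed = 0;
  for (const operation of operations) {
    if (operation.OrderId === order.Id && !operation.Urgent) {
      operation.Urgent = true;
      changed += 1;
    }
  }
  return changed;
}

const changedCount = onOrderChanged({ Id: "o-1", Urgent: true });
```

Calling the trigger again for the same order changes nothing, so it is safe to run it on every save.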

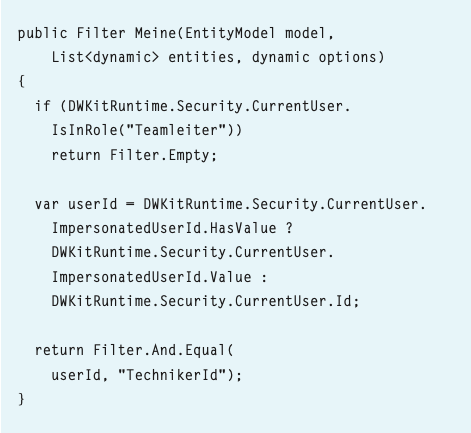

Another option is to define special filters on the server side. In a form, for example, only those tasks should be displayed for which the logged-in user is registered as the responsible technician. Team leaders, on the other hand, should be able to see the tasks of all technicians. This can be done using a filter defined on the server and integrated into the client (Listing 5).
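The filter rule can be sketched as follows. The example is JavaScript with made-up data. The role name `TeamLeader`, the field `Technician` and the functions `buildTaskFilter` and `getVisibleTasks` are assumptions and do not reproduce Listing 5.

```javascript
// In-memory stand-in for the task table (hypothetical data).
const tasks = [
  { Id: 1, Technician: "anna" },
  { Id: 2, Technician: "ben" },
  { Id: 3, Technician: "anna" },
];

// The filter is built on the server from the logged-in user, not from client input.
function buildTaskFilter(user) {
  if (user.roles.includes("TeamLeader")) {
    return () => true; // team leaders see the tasks of all technicians
  }
  return (task) => task.Technician === user.name;
}

function getVisibleTasks(user) {
  return tasks.filter(buildTaskFilter(user));
}

const forTechnician = getVisibleTasks({ name: "anna", roles: ["Technician"] });
const forTeamLeader = getVisibleTasks({ name: "carl", roles: ["TeamLeader"] });
```

Because the decision is made on the server, a manipulated client cannot widen its own view.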

Listing 4: Trigger

Listing 5: Include filter

Conclusion

With low-code approaches, there must be no show stopper at the end: it must be possible to implement even complex functions. Ideally, these functions are defined once and then called and parameterised by consultants depending on the use case. This is possible with the low-code platform DWKit. JavaScript functions can also be inserted at any point in the client. The framework is therefore a powerful and flexible tool.

With such tools, it is difficult to test the code. The functions on the server require certain workflow states in order to work and are not easy to test. Experienced developers know that every change requires a complete test of the entire solution. That is the price you pay for flexibility. But the tests don't necessarily have to be done by a developer; consultants can do that as well. And for us developers, that leaves time to read the next article ...

Author: Bernhard Pichler (LinkedIn)

Graduate theologian Bernhard Pichler was the CEO of the software company Informare for 20 years before he was named Head of Development and Product Management at DPS BS. DPS BS is Germany's largest software partner for business management software for small and medium-sized businesses.